The First Fissures: AI Data Center Financing Is Starting to Crack

Last week, Blue Owl Capital tried to syndicate $4 billion in debt for a CoreWeave data center in Lancaster, Pennsylvania. Lenders passed. "We saw it. We passed," a senior executive at one specialty lender told Business Insider.

The same week, Blue Owl permanently halted quarterly redemptions from one of its retail private credit funds, sold $1.4 billion in loans across three vehicles, and began what analysts are calling an "orderly liquidation" of the fund. Redemption requests had been surging — hitting 15% of net asset value at one of its tech-focused vehicles.

A day later, CoreWeave's stock dropped 12%.

The same firm navigating a complex syndication environment is simultaneously managing liquidity by gating redemptions in a specific retail vehicle. While this ensures an orderly liquidation for that fund, it highlights the growing pains of matching retail liquidity with the long-term capital demands of AI infrastructure.

And Blue Owl isn't a marginal player. This is the firm that structured Meta's $27.3 billion Hyperion data center deal. They manage $295 billion in assets. If Blue Owl is having trouble finding lenders for AI infrastructure debt, it's not because they don't know how to work the phones.

This follows reports that banks faced pricing headwinds when syndicating $38 billion in debt for an Oracle data center campus. Oracle's subsequent announcement that it would raise $50 billion through equity and bonds suggests a deliberate pivot to preserve its investment-grade rating rather than rely solely on the increasingly expensive private credit market.

I've spent the last several months digging into the financing structures behind the AI data center buildout. What I found is a market where the macro numbers have gone from big to staggering, the capital structures are getting more exotic, and the gap between AI hype and credit reality is widening. Blue Owl could be the first indication of what’s to come.

The numbers

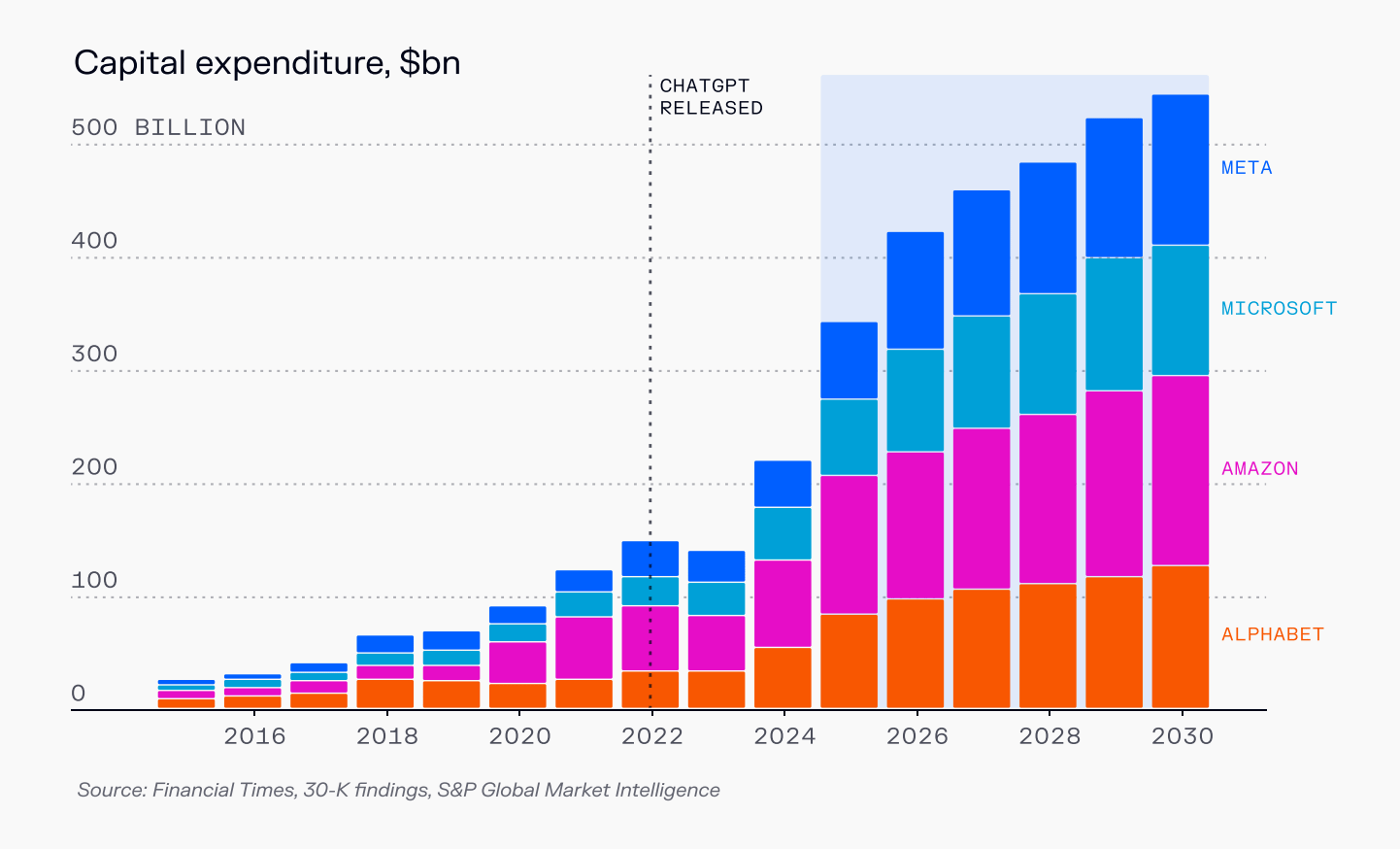

By Q2 2025, the hyperscalers were approaching $100 billion of capital expenditures per quarter on compute. Meta, Microsoft, Alphabet, Amazon, Oracle — the combined run-rate is now approaching $400 billion annually, overwhelmingly skewed toward AI and data center infrastructure.

AI spending is projected to push overall capex to roughly $1.3 trillion by 2027. McKinsey estimates that global data center spending driven by AI could reach $3.7–7.9 trillion cumulatively from 2025–2030, with a base case of roughly $5.2 trillion.

To put that in context: $5.2 trillion would represent approximately 17% of US GDP over that period, more than twice the equivalent share during the dot-com boom. Justifying that spend would require roughly 4.4% annual GDP growth against an expected baseline of 3.2%. Since 1960, only 16 years have delivered that kind of growth.

For most of the last decade, this was funded the simple way: out of the hyperscalers' own balance sheets. They used operating cash flow and unsecured corporate bonds to build shells and buy servers, and if they overbuilt, they ate it.

Now something different is happening. Secured debt issuance linked to data centers has exploded — from roughly $1 billion in 2022 to an estimated $25 billion or more in 2025. Microsoft alone carries north of $180 billion in total lease obligations. Meta's Hyperion project issued more than $27 billion of project-style secured debt. CoreWeave raised roughly $10–15 billion in high-coupon private credit backed by GPU racks and a handful of contracts. Oracle is leaning hard on debt to expand its AI cloud footprint.

We're financing the AI buildout with increasingly exotic capital structures — and the first stress tests are failing.

The mismatch at the core

A modern AI data center is not "a bunch of GPUs in a warehouse." GPUs and accelerators represent roughly 35–40% of total project capex. The rest is servers, networking, storage, power distribution, cooling, electrical systems, land, shell, and fit-out.

The problem isn't that GPUs are 90% of the cost. The problem is that they are the single largest line item and they age very differently from everything else.

Shells and power infrastructure are multi-decade assets. A well-sited campus with grid interconnect and substations can be useful for 20–30 years. You can change tenants. You can change what runs in the racks.

GPUs are not like that. Physically, a data center GPU can run for 5–8 years. Economically, at the frontier, it's more like 3–5 years before the next generation is 2–3x better on performance per watt. Operators like CoreWeave target roughly 2.5-year cash payback on GPU-heavy cohorts, because they know that's the window where the asset is most valuable.

Long-lived shells and power, financed over 10–20 years. Shorter-lived GPUs inside them, with 3–5 years of meaningful economic life. That mismatch is the fault line.

Two models, two risk profiles

Hyperion (Meta/Blue Owl): The clean structure. The project debt does not finance GPUs. Meta buys the GPUs itself and absorbs the entire replacement-cycle risk on its own balance sheet. The $27.3 billion in senior secured notes (A+ rated, ~6.6% coupon) finance the campus: land, buildings, substations, power, cooling. Meta backs long-term leases with "hell-or-high-water" style payment commitments. If the GPU cycle accelerates or the economics disappoint, Meta equity eats that. Hyperion bondholders do not suddenly own a pile of obsolete H100s.

That said, Hyperion isn't risk-free. Rising power prices push operating costs up over time. And in a global overbuild of AI capacity, a 2–5 GW campus doesn't have an easy "Plan B" tenant. Meta does not sell compute. If all the hyperscalers have excess capacity at once, re-tenanting is harder than it looks on a slide deck.

CoreWeave: The levered GPU play. CoreWeave is almost pure exposure to GPU economics. It rents capacity to customers like Microsoft, OpenAI, and Nvidia. On the liability side, CoreWeave has raised multiple large private-credit facilities: a roughly $2.3 billion GPU-backed loan, a $7.5+ billion delayed-draw term loan, and another $2.6 billion facility linked to a long-term OpenAI contract. Most of this is held in SPVs that own pools of GPUs. The cost of capital is often in the low-teens all-in. The collateral is physical GPUs, with loans sized at roughly 70–80% of purchase cost, plus assigned contracts with a small set of very large customers.

This is the structure that lenders scrutinized in Lancaster. CoreWeave's B+ credit rating reflects its aggressive leverage, but that leverage sits against a multibillion-dollar revenue backlog. The friction in Lancaster isn't necessarily a lack of collateral, but a debate over how to price risk in a company that is outgrowing the capacity of traditional syndicates.

The unit economics of the GPU debt stack

Take an H100. All-in capex per GPU, including its share of the server, is about $40,000. Assume a 2.5-year payback target. At 70% utilization, that's about 15,300 "on" hours over the payback window. To earn back $40,000, you need roughly $2.60 in capex recovery per GPU-hour.

Layer on power, cooling, lease costs, and operations — roughly another $1.00–1.50 per GPU-hour for a heavily loaded cluster in a modern facility, with upward pressure as power prices and grid upgrades get priced in.

All-in cost: roughly $3.50–4.00 per GPU-hour today, trending higher.
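The arithmetic above can be reproduced in a few lines. This is a minimal sketch using only the figures quoted in this section ($40,000 capex, a 2.5-year payback, 70% utilization, $1.00–1.50 of operating cost per GPU-hour); the function and variable names are illustrative, and treating operating cost as a flat per-hour adder is a simplifying assumption.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def capex_recovery_per_hour(capex_usd, payback_years, utilization):
    """Dollars per billable GPU-hour needed to earn back capex within the payback window."""
    on_hours = payback_years * HOURS_PER_YEAR * utilization
    return capex_usd / on_hours


# Figures quoted in the text.
capex_usd = 40_000
payback_years = 2.5
utilization = 0.70
opex_low, opex_high = 1.00, 1.50  # power, cooling, lease, operations (assumed flat per hour)

on_hours = payback_years * HOURS_PER_YEAR * utilization
recovery = capex_recovery_per_hour(capex_usd, payback_years, utilization)
all_in_low = recovery + opex_low
all_in_high = recovery + opex_high

print(f"on hours: {on_hours:,.0f}")                                # on hours: 15,330
print(f"capex recovery: ${recovery:.2f}/GPU-hour")                 # capex recovery: $2.61/GPU-hour
print(f"all-in: ${all_in_low:.2f}-${all_in_high:.2f}/GPU-hour")    # all-in: $3.61-$4.11/GPU-hour
```

Summed exactly, the all-in figure lands at about $3.61–4.11, slightly above the rounded $3.50–4.00 range used here. The difference is small next to the uncertainty in the inputs.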

If you're paying CoreWeave around $3.00–4.00 per hour, can you make money? It depends entirely on what you're selling. And this is where the market has bifurcated into two very different realities.

The bifurcation: commodity trap vs. frontier economics

Reality 1: The commodity trap. If you're renting H100s to serve open-weights models like Llama, you're currently underwater. An H100 can generate on the order of a couple million tokens per hour on optimized settings, but the market price for those tokens has collapsed. At roughly $0.80 per million tokens, that's about $1.60 of revenue per GPU-hour — against a cost floor of $3.50–4.00. Negative gross margin before you've paid for engineering, go-to-market, or support.
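To make the commodity-trap math concrete, here is a rough sketch of gross margin per GPU-hour. It takes "a couple million tokens per hour" as exactly 2 million, which is an assumption, and uses the $0.80 per million token price and the $3.50–4.00 cost floor from the text. The function name is illustrative.

```python
def revenue_and_margin(tokens_per_hour, price_per_million_tokens, cost_per_gpu_hour):
    """Return (revenue, gross margin) in dollars per GPU-hour for a token-serving workload."""
    revenue = tokens_per_hour / 1_000_000 * price_per_million_tokens
    return revenue, revenue - cost_per_gpu_hour


TOKENS_PER_HOUR = 2_000_000  # assumption: "a couple million" read as 2M
PRICE_PER_MILLION = 0.80     # dollars per million tokens, from the text

revenue, margin_at_low_cost = revenue_and_margin(TOKENS_PER_HOUR, PRICE_PER_MILLION, 3.50)
_, margin_at_high_cost = revenue_and_margin(TOKENS_PER_HOUR, PRICE_PER_MILLION, 4.00)

# Token price at which revenue would just cover the cost floor.
breakeven_price_low = 3.50 / (TOKENS_PER_HOUR / 1_000_000)
breakeven_price_high = 4.00 / (TOKENS_PER_HOUR / 1_000_000)

print(f"revenue: ${revenue:.2f}/GPU-hour")                                     # revenue: $1.60/GPU-hour
print(f"margin: ${margin_at_high_cost:.2f} to ${margin_at_low_cost:.2f}")      # margin: $-2.40 to $-1.90
print(f"breakeven: ${breakeven_price_low:.2f}-${breakeven_price_high:.2f}/M")  # breakeven: $1.75-$2.00/M
```

Under these assumptions, token prices would need to roughly double, or throughput per GPU would need to roughly double at the same price, before a commodity renter reaches breakeven on gross margin.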

Reality 2: The frontier play. The math changes if you're a hyperscaler or frontier lab using compute to train the next model. These customers can plausibly afford $3–4 per GPU-hour, but there are limited buyers training frontier models.

The middle of the market is under significant pressure. Without a proprietary model or a vertical SaaS moat, generic GPU renters are finding it harder to compete with hyperscale pricing. Unless these providers pivot to niches such as specialized inference or sovereign cloud, they risk being stuck in a commodity trap.

Micro solvency vs. system-level returns

The fact that a single GPU cohort can pencil under current conditions does not mean the entire sector's planned capex can be justified on the same terms.

If roughly 40% of that $5.2 trillion base case goes into GPUs and fast-depreciating IT, that's roughly $2 trillion of GPU capex — on the order of fifty million-plus high-end accelerators installed globally. Plug in the per-GPU assumptions and you arrive at implied GPU rental spend in the trillions. At that point you need to believe there are multiple Microsoft-sized AI businesses waiting to be built.
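The scale-up can be sketched the same way. The code below takes the $5.2 trillion base case and the 40% share from this paragraph and reuses the per-GPU figures from the H100 example ($40,000 per GPU, 70% utilization, $3.50–4.00 per GPU-hour). Treating every dollar of GPU capex as an H100-equivalent, and assuming the whole fleet is installed and rented at once, are deliberate simplifications rather than a forecast.

```python
HOURS_PER_YEAR = 8760

BASE_CASE_USD = 5.2e12          # McKinsey base case, from the text
GPU_SHARE = 0.40                # share going to GPUs and fast-depreciating IT
CAPEX_PER_GPU = 40_000          # H100-equivalent, from the unit-economics example
UTILIZATION = 0.70
RATE_LOW, RATE_HIGH = 3.50, 4.00  # dollars per GPU-hour

gpu_capex = BASE_CASE_USD * GPU_SHARE
gpu_count = gpu_capex / CAPEX_PER_GPU

# Billable hours per year if the entire fleet ran at the assumed utilization.
annual_gpu_hours = gpu_count * HOURS_PER_YEAR * UTILIZATION
annual_spend_low = annual_gpu_hours * RATE_LOW
annual_spend_high = annual_gpu_hours * RATE_HIGH

print(f"GPU capex: ${gpu_capex / 1e12:.2f}T")          # GPU capex: $2.08T
print(f"GPUs: {gpu_count / 1e6:.0f} million")          # GPUs: 52 million
print(f"implied rental spend: ${annual_spend_low / 1e12:.2f}T-"
      f"${annual_spend_high / 1e12:.2f}T per year")    # implied rental spend: $1.12T-$1.28T per year
```

On these inputs, the implied rental bill is more than $1 trillion per year, or roughly $3 trillion over a 2.5-year payback window.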

Justifying the McKinsey base case would take roughly 4.4% annual GDP growth sustained across the forecast window, a pace reached in only 16 years since 1960. That's not impossible. But it's a bet, not a baseline.

What Blue Owl is telling us

Go back to the two stories from last week. Blue Owl couldn't syndicate CoreWeave's Lancaster debt because lenders looked at a B+ credit rating, a company burning through billions in high-interest debt to fuel growth, and a $500 million bridge loan coming due in March — and decided the risk-reward didn't work. Simultaneously, Blue Owl's own investors were demanding their money back at rates that exceeded the fund's ability to generate liquidity from its illiquid loan book.

BMO Capital Markets analyst Brennan Hawken said it plainly: "If there is a struggle to find the debt financing, that's a bit of a red flag."

CNBC called it "a canary in the coal mine."

These are not the words you hear when the market is functioning normally. This is what the early stages of a repricing look like — not a crash, but a gradual realization that the credit assumptions underlying the buildout don't hold for every player.

CoreWeave-style structures work if and only if their book is dominated by frontier customers — the Microsofts and OpenAIs who use chips to drive high-value products or training runs. They become incredibly fragile if the market relies on commodity renters to soak up excess capacity.

The fragility points: concentration risk (if Microsoft or OpenAI pull back, there is no profitable middle class to absorb the chips), the economic window of the chip (can lenders get repaid before the H100 becomes second-tier?), utilization sensitivity (the model breaks fast if 70% drops to 50%), and the subsidy curve (a lot of today's AI usage is still funded by promotional credits, investor dollars, or big tech accepting near-term losses for strategic positioning — if that flattens, demand for expensive rented GPUs falls faster than the debt amortization schedule).
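The utilization point is easy to quantify. The sketch below holds price and operating cost fixed and asks how long a $40,000 GPU takes to pay back at 70% versus 50% utilization. The $3.60 rental price and $1.00 operating cost are illustrative picks from within the ranges quoted earlier, not reported figures, and operating cost is assumed to scale with billable hours.

```python
HOURS_PER_YEAR = 8760


def payback_years(capex_usd, price_per_hour, opex_per_hour, utilization):
    """Years of operation needed for per-hour contribution margin to repay capex."""
    margin = price_per_hour - opex_per_hour
    if margin <= 0:
        raise ValueError("price does not cover operating cost; capex is never repaid")
    return capex_usd / (margin * HOURS_PER_YEAR * utilization)


CAPEX_USD = 40_000  # per GPU, from the text
PRICE = 3.60        # illustrative, within the $3.00-4.00 range
OPEX = 1.00         # illustrative, low end of the $1.00-1.50 range

payback_at_70 = payback_years(CAPEX_USD, PRICE, OPEX, 0.70)
payback_at_50 = payback_years(CAPEX_USD, PRICE, OPEX, 0.50)

print(f"payback at 70% utilization: {payback_at_70:.2f} years")  # payback at 70% utilization: 2.51 years
print(f"payback at 50% utilization: {payback_at_50:.2f} years")  # payback at 50% utilization: 3.51 years
```

A drop from 70% to 50% stretches payback by about a year, pushing it into the 3–5 year window in which the chip is already losing ground to the next generation.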

Bottom line

I remain long-term bullish on AI and data center demand. There is a credible path to more models, more inference, more latency-sensitive workloads. Many of the shells and substations being built today will be useful for decades.

But bullishness on the technology is not the same as bullishness on every financing structure built on top of it. The market is beginning to distinguish between the two — and Blue Owl's week is what that process looks like in real time.

If something pops, it won't look like 2008. It will look like private-credit funds taking painful write-downs on GPU-backed loans, neoclouds getting restructured or sold for a fraction of invested capital, and a realization that while the technology works, reselling raw compute without a proprietary model or product is a dead end.

The bubble isn't in AI. It's in the assumption that commodity GPU rental is a business. The frontier players will be fine. The middle class of renters is already dead. And the lenders who financed them are starting to figure that out.

Blue Owl just showed us what "starting to figure it out" looks like.

Next: Oracle's $131 billion balance sheet and what it means for AI infrastructure risk.